How to measure AI support agent performance: 10 key metrics and KPIs

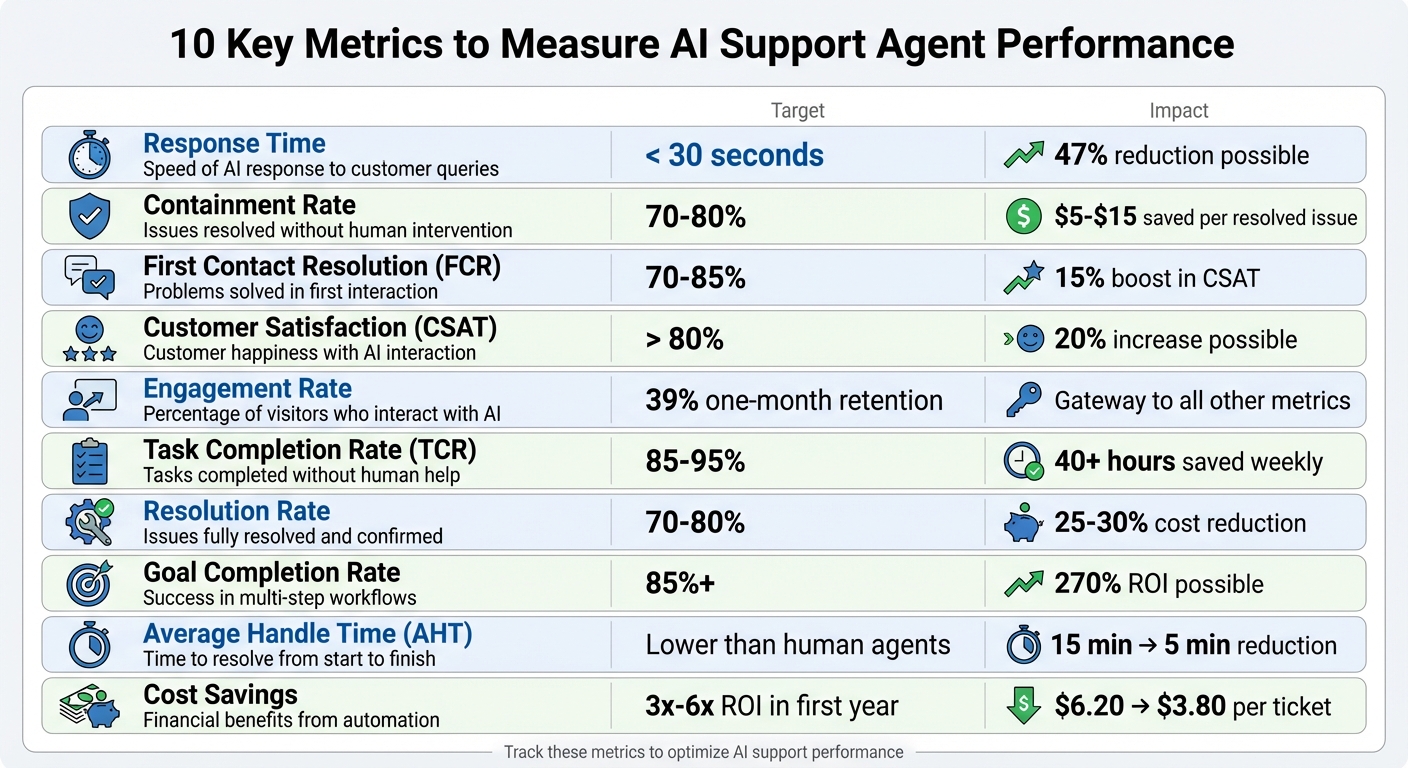

Want to measure how well your AI support agent is performing? Start by tracking the right metrics. Here’s a quick overview of the 10 key metrics that help you understand how effectively your AI system resolves customer issues, improves satisfaction, and reduces costs:

- Response Time: How fast the AI responds to customer queries. Target: under 30 seconds.

- Containment Rate: Percentage of issues resolved without human intervention. Target: 70–80%.

- First Contact Resolution (FCR): Problems solved in the first interaction. Target: 70–85%.

- Customer Satisfaction (CSAT): Measures customer happiness. Target: Above 80%.

- Engagement Rate: Tracks how many users interact with the AI.

- Task Completion Rate (TCR): Successful task completions without human help. Target: 85–95%.

- Resolution Rate: Confirms whether issues are fully solved. Target: 70–80%.

- Goal Completion Rate: Tracks success in guiding users through workflows. Target: 85%+.

- Average Handle Time (AHT): Time spent resolving a ticket. Goal: Faster than human agents.

- Cost Savings: Financial benefits from automation. Goal: 3x–6x ROI in the first year.

Why track these metrics? They show where your AI excels, where it struggles, and how it impacts your bottom line. For example, high containment rates reduce costs, while strong FCR boosts customer loyalty. Pair these metrics with customer feedback to refine your AI system and maximize its value.

10 Key Metrics to Measure AI Support Agent Performance

1. Response Time

Definition and Relevance to AI Support Performance

First Response Time (FRT) tracks how long it takes from when a customer sends a message to when the AI agent delivers its first reply. Speed is not the only thing that matters, but a quick reply signals attentiveness and reassures customers that their issue is being handled, whereas delays can make support feel inefficient or indifferent.

Calculation or Measurement Method

FRT is calculated by measuring the time difference between the customer’s inquiry and the AI’s initial reply, then averaging that time across multiple interactions. Beyond averages, it’s helpful to analyze response time distributions. For instance, tracking how many replies happen within 10 seconds versus those taking longer than 30 seconds can uncover patterns or reveal where the AI struggles with complex queries.
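As a rough illustration of the averaging and distribution analysis described above, here is a minimal Python sketch. The sample timestamps, the function names, and the 10-second and 30-second buckets as code constants are assumptions for illustration, not part of any particular platform.

```python
from datetime import datetime
from statistics import mean

# Hypothetical sample: (customer message time, first AI reply time) per conversation.
conversations = [
    (datetime(2025, 1, 6, 9, 0, 0), datetime(2025, 1, 6, 9, 0, 4)),
    (datetime(2025, 1, 6, 9, 5, 0), datetime(2025, 1, 6, 9, 5, 8)),
    (datetime(2025, 1, 6, 9, 10, 0), datetime(2025, 1, 6, 9, 10, 20)),
    (datetime(2025, 1, 6, 9, 15, 0), datetime(2025, 1, 6, 9, 15, 48)),
]


def first_response_seconds(pairs):
    """Return the first-response time in seconds for each conversation."""
    return [(reply - asked).total_seconds() for asked, reply in pairs]


def frt_summary(pairs):
    """Average FRT plus the share of replies within 10 s and over 30 s."""
    times = first_response_seconds(pairs)
    total = len(times)
    return {
        "average_seconds": mean(times),
        "share_within_10s": sum(t <= 10 for t in times) / total,
        "share_over_30s": sum(t > 30 for t in times) / total,
    }


summary = frt_summary(conversations)
print(summary)
```

In this sample the average looks acceptable, yet a quarter of replies take longer than 30 seconds, which is the kind of pattern an average alone would hide.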

Industry Benchmarks or Targets

For AI agents, the standard benchmark is a response time of less than 30 seconds. A good example is iMoving, a logistics company that, in 2025, integrated its AI with live order tracking. This reduced their first response latency by 47%, leading to a noticeable improvement in customer satisfaction during high-demand periods.

“Speed without accuracy creates frustration; accuracy without speed creates abandonment.” – AgentiveAIQ

These benchmarks serve as clear goals for fine-tuning your AI’s response capabilities.

Business Impact and Optimization Potential

Quicker response times are directly tied to higher customer satisfaction (CSAT) scores and better retention rates. As the iMoving example shows, AI-powered systems can cut first response latency by as much as 47%, which keeps customers happier and lowers operational costs. To achieve this, integrate your AI with backend systems such as CRMs or order tracking platforms so it has instant access to the data it needs for precise responses. Also monitor 95th percentile (P95) latency to catch worst-case delays, and set Service Level Agreements (SLAs) that trigger alerts when response times exceed acceptable limits.
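One possible way to check P95 latency against an SLA limit is sketched below. It assumes a nearest-rank percentile definition and a 30-second threshold taken from the benchmark above; the function names and sample latencies are hypothetical.

```python
import math


def percentile_nearest_rank(values, pct):
    """Nearest-rank percentile: the smallest sample with at least pct% of samples at or below it."""
    if not values:
        raise ValueError("values must not be empty")
    ordered = sorted(values)
    rank = math.ceil(pct / 100 * len(ordered))
    return ordered[max(rank, 1) - 1]


def sla_breached(latencies, threshold_seconds=30.0, pct=95):
    """Return (breached, observed percentile) for the given latency samples."""
    observed = percentile_nearest_rank(latencies, pct)
    return observed > threshold_seconds, observed


# Hypothetical first-response latencies in seconds.
latencies = [2, 3, 3, 4, 5, 5, 6, 8, 12, 45]
breached, p95 = sla_breached(latencies)
print(f"P95 = {p95}s, SLA breached: {breached}")
```

Other percentile definitions (for example, interpolated ones) give slightly different values on small samples, so it helps to pick one method and use it consistently.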


2. Containment Rate

Definition and Relevance to AI Support Performance

The containment rate is the percentage of customer interactions resolved entirely by your AI agent, with no follow-up through other channels such as email, phone, or social media within a set timeframe. It is one of the clearest indicators of how well AI chatbots improve customer self-service. It goes beyond simple deflection, which only measures whether a human agent was avoided during a single session.

The key distinction here is that containment confirms the issue is resolved. A bot might deflect a conversation simply by not offering escalation options, but if the customer later contacts support through another channel, that’s not containment. As Lauren Goerz from Rasa puts it:

“Deflection tells you the AI didn’t hand off to a human. Containment tells you the customer didn’t need additional help.”

Understanding this difference is crucial for evaluating your AI’s performance.

Calculation or Measurement Method

The formula is straightforward: divide the number of AI conversations with no follow-up on the same issue by the total number of business conversations, then multiply by 100. To track this effectively, ensure your systems are integrated and use consistent user IDs across channels.

It’s also important to define a “look-back” period – usually 24 to 48 hours – to monitor for repeat inquiries. This window captures cases where customers initially seem satisfied but later return because their issue wasn’t fully resolved.

Keep an eye on the relationship between containment rates and customer satisfaction (CSAT). High containment rates paired with low CSAT scores could indicate that customers are abandoning the process out of frustration rather than having their problems solved.
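The containment formula and look-back window can be sketched as follows. This is a simplified illustration that assumes consistent user IDs and issue labels across channels, uses a 48-hour window, and takes AI conversations as the denominator; all record fields and names are hypothetical.

```python
from datetime import datetime, timedelta

LOOK_BACK = timedelta(hours=48)  # assumption: upper end of the 24 to 48 hour window

# Hypothetical AI conversations and later contacts on any support channel.
ai_conversations = [
    {"user_id": "u1", "issue": "order_status", "ended_at": datetime(2025, 3, 1, 10, 0)},
    {"user_id": "u2", "issue": "refund", "ended_at": datetime(2025, 3, 1, 11, 0)},
    {"user_id": "u3", "issue": "password_reset", "ended_at": datetime(2025, 3, 1, 12, 0)},
    {"user_id": "u4", "issue": "order_status", "ended_at": datetime(2025, 3, 1, 13, 0)},
]
follow_ups = [
    {"user_id": "u2", "issue": "refund", "channel": "email", "at": datetime(2025, 3, 2, 10, 0)},
    {"user_id": "u3", "issue": "password_reset", "channel": "phone", "at": datetime(2025, 3, 6, 12, 0)},
    {"user_id": "u4", "issue": "refund", "channel": "email", "at": datetime(2025, 3, 1, 15, 0)},
]


def is_contained(conversation, contacts, window=LOOK_BACK):
    """True if the same user did not return about the same issue within the window."""
    for contact in contacts:
        same_issue = (
            contact["user_id"] == conversation["user_id"]
            and contact["issue"] == conversation["issue"]
        )
        elapsed = contact["at"] - conversation["ended_at"]
        if same_issue and timedelta(0) < elapsed <= window:
            return False
    return True


def containment_rate(conversations, contacts, window=LOOK_BACK):
    """Percentage of AI conversations with no same-issue follow-up in the window."""
    if not conversations:
        return 0.0
    contained = sum(is_contained(c, contacts, window) for c in conversations)
    return 100 * contained / len(conversations)


rate = containment_rate(ai_conversations, follow_ups)
print(f"Containment rate: {rate:.1f}%")
```

Here only the refund conversation for `u2` breaks containment: the `u3` follow-up falls outside the window and the `u4` follow-up concerns a different issue.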

Industry Benchmarks or Targets

For modern AI chatbots, a containment rate of 70%–80% is a common goal. However, performance varies widely based on the technology and the complexity of customer inquiries:

- Basic rule-based systems: Typically achieve 20%–40%.

- Advanced AI-powered agents: Can reach 70%–90% with deeper system integrations.

Benchmarks also differ by industry. For example:

- E-commerce: Often achieves 70%–80%, with top performers hitting 89%–92%.

- Banking: Usually sees lower rates (50%–70%) due to regulatory and compliance complexities.

The type of customer intent also plays a role. Straightforward tasks, like tracking an order, often have containment rates of 80%–90%. In contrast, more nuanced issues, such as refund requests, tend to fall between 35%–60%.

Business Impact and Optimization Potential

Every issue resolved by AI instead of a human saves between $5 and $15. For instance, a Canadian telecommunications company saved $13 million in productivity costs by prioritizing containment and true resolution over activity-based metrics.

To improve your containment rate, focus on connecting your AI to backend systems like CRMs and order management platforms. This allows the AI to resolve issues without needing human intervention. Leveraging tools like Retrieval-Augmented Generation (RAG) and systematic error tracking can also help expand your knowledge base and close gaps in your AI’s capabilities.

Another key strategy is tracking “unsupported requests” – these are queries where the AI lacks the necessary information. Use this data to strategically grow your knowledge base. However, aiming for 100% containment isn’t practical or advisable. High-value sales and sensitive issues should always have clear escalation paths to a human agent.

3. First Contact Resolution Rate

Definition and Relevance to AI Support Performance

First Contact Resolution (FCR) refers to the percentage of customer problems your AI agent successfully addresses during the initial interaction, without requiring follow-ups. This metric separates systems that genuinely solve issues from those that merely redirect or delay them. It’s often called a “truth metric” because it highlights whether problems are resolved comprehensively. Bildad Oyugi, Head of Content at Helply, puts it this way:

“A high FCR means your AI is highly effective and creates an effortless experience for customers, which is a huge driver of loyalty.”

Here’s something to think about: 81% of customers attempt to solve their issues independently before reaching out to support. If your AI fails to resolve their problem the first time, it not only wastes their time but also risks eroding their trust in your service.

Calculation or Measurement Method

Once you understand what FCR measures, calculating it is straightforward. Divide the number of cases resolved on the first contact by the total number of cases, then multiply by 100 to get the percentage. To ensure accuracy, track repeat contacts – customers returning within 7–14 days for the same issue. This helps identify cases that seemed resolved but weren’t. Breaking FCR into categories like AI-only resolutions, AI-to-human handoffs, and human-only tickets can uncover where your AI performs well or needs improvement.

For a more precise evaluation, implement binary feedback tools, like asking, “Did this resolve your issue?” immediately after the AI provides a solution. If a conversation covers multiple distinct issues, use metadata tags to track resolution at the issue level.
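To make the FCR calculation concrete, the sketch below segments cases by handling path and discounts any case where the customer came back within the repeat-contact window. The 14-day window, the field names, and the sample cases are assumptions for illustration.

```python
from collections import defaultdict

REPEAT_WINDOW_DAYS = 14  # assumption: upper end of the 7 to 14 day window

# Hypothetical cases; days_to_repeat is None when the customer never came back.
cases = [
    {"path": "ai_only", "resolved_first_contact": True, "days_to_repeat": None},
    {"path": "ai_only", "resolved_first_contact": True, "days_to_repeat": 3},
    {"path": "ai_only", "resolved_first_contact": True, "days_to_repeat": 30},
    {"path": "ai_to_human", "resolved_first_contact": False, "days_to_repeat": None},
    {"path": "ai_to_human", "resolved_first_contact": True, "days_to_repeat": None},
    {"path": "human_only", "resolved_first_contact": True, "days_to_repeat": None},
]


def counts_as_fcr(case, window_days=REPEAT_WINDOW_DAYS):
    """Resolved on first contact and no repeat contact inside the window."""
    repeat = case["days_to_repeat"]
    came_back = repeat is not None and repeat <= window_days
    return case["resolved_first_contact"] and not came_back


def fcr_by_path(all_cases, window_days=REPEAT_WINDOW_DAYS):
    """FCR percentage for each handling path."""
    totals = defaultdict(int)
    resolved = defaultdict(int)
    for case in all_cases:
        totals[case["path"]] += 1
        resolved[case["path"]] += counts_as_fcr(case, window_days)
    return {path: 100 * resolved[path] / totals[path] for path in totals}


fcr = fcr_by_path(cases)
for path, value in fcr.items():
    print(f"{path}: {value:.1f}%")
```

The second AI-only case looked resolved at first but generated a repeat contact after three days, so it does not count toward FCR.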

Industry Benchmarks or Targets

For AI support agents, an FCR above 70% is a solid goal. Most high-performing AI systems can resolve 50% to 70% of repetitive Tier 1 issues, and some platforms promise a 65% resolution rate within 90 days of deployment. Improving FCR also has a direct impact on customer satisfaction: faster resolutions can boost Customer Satisfaction Scores (CSAT) by up to 15%. And since 90% of consumers consider an immediate response crucial, FCR is worth prioritizing.

Business Impact and Optimization Potential

The difference between high deflection rates and true resolution has tangible business implications. As EverWorker puts it:

“Optimizing for deflection can create a ‘knowledgeable receptionist’ that explains policies and then escalates, while optimizing for resolution creates an experience where the AI completes the process end-to-end.”

To enhance both first response time and FCR, consider integrating your AI with internal systems like Shopify or your CRM. This allows the AI to handle actions – such as processing refunds or resetting passwords – rather than merely providing instructions. Use analytics to identify where users abandon interactions or repeatedly prompt the system, as these behaviors often indicate gaps in your AI’s knowledge base. Testing AI against historical tickets can also reveal resolution rates and failure points.

Tracking FCR alongside other metrics, like containment rate, ensures you’re not just deflecting issues but genuinely resolving them. When escalations are necessary, make sure your AI passes full context to human agents, enabling them to resolve the issue in a single interaction. This “one-touch human resolution” approach not only saves time but also enhances the overall customer experience.

4. Customer Satisfaction Score (CSAT)

Definition and Relevance to AI Support Performance

While efficiency metrics like response time are vital, CSAT measures the quality of customer interactions. It gauges how satisfied users are after a specific AI interaction. Unlike metrics that focus on speed or ticket deflection, CSAT captures customer “happiness” and indicates whether they trust your AI assistant. This is crucial because an AI bot might meet automation goals while still leaving users frustrated. As Lauren Goerz from Rasa puts it:

“CSAT is our primary optimization metric because it directly reflects AI interaction quality.”

In fact, 73% of customers believe AI improves service, and 80% report positive interactions. CSAT complements operational metrics by offering insights into customer trust and satisfaction with AI-driven support.

Calculation or Measurement Method

To calculate CSAT, divide the number of positive responses (typically ratings of 4 or 5 on a 5-point scale) by the total number of survey responses, then multiply by 100. Surveys should be sent immediately after each AI interaction to capture fresh feedback.

For deeper insights, segment your CSAT into three categories:

- AI-only resolutions

- AI-to-human handoffs

- Human-only tickets

This segmentation helps pinpoint where your AI excels and where human agents are driving satisfaction. Always include a comment box in the survey. While the numeric score tells you what customers think, the comments reveal why they feel that way.
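The CSAT formula and the three-way segmentation can be sketched like this. It assumes a 5-point scale where ratings of 4 or 5 count as positive; the sample ratings and names are hypothetical.

```python
def csat(ratings, positive_threshold=4):
    """Share of ratings at or above the threshold, as a percentage (None if no responses)."""
    if not ratings:
        return None
    positive = sum(r >= positive_threshold for r in ratings)
    return 100 * positive / len(ratings)


# Hypothetical survey ratings on a 5-point scale, grouped by handling path.
surveys = {
    "ai_only": [5, 4, 4, 3, 5],
    "ai_to_human": [2, 4, 3, 5],
    "human_only": [5, 5, 4],
}

scores = {segment: csat(ratings) for segment, ratings in surveys.items()}
overall = csat([r for ratings in surveys.values() for r in ratings])

print(scores)
print(f"Overall CSAT: {overall:.1f}%")
```

In this sample the overall score hides a weak spot: handoffs score well below AI-only and human-only conversations.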

Industry Benchmarks or Targets

For AI-driven support systems, aim for a CSAT score above 80% to reflect strong performance. Most benchmarks for AI support range between 65% and 85%. High CSAT scores confirm that your AI is not just efficient but also effective at resolving customer concerns. Companies using AI support have reported up to a 20% increase in CSAT scores, while faster response times driven by AI can boost CSAT by as much as 15%. Additionally, 90% of consumers prioritize an immediate response when seeking customer service.

Business Impact and Optimization Potential

CSAT is a powerful tool for identifying areas where your AI needs improvement. Unlike broader metrics like NPS, CSAT pinpoints specific workflows – like refunds or password resets – that may be underperforming. A drop in CSAT can signal knowledge gaps or outdated training data, allowing you to make quick adjustments.

Pay close attention to CSAT scores during AI-to-human handoffs. If customers frequently report frustration, it might indicate issues like context loss or the need to repeat information during the transfer. For consistently low CSAT on certain topics, tweak the AI’s dialogue or update its knowledge base to address those issues.

The ultimate goal isn’t just automating tasks – it’s creating a support experience where customers feel genuinely helped. As Bildad Oyugi from Helply highlights:

“If your AI is frequently wrong, customers will lose confidence in it and your brand.”

5. Engagement Rate

Once you’ve assessed response time, containment, and first contact resolution, it’s time to look at engagement rate. This metric reveals how actively visitors interact with your AI support agent – an important factor in evaluating its effectiveness.

Definition and Relevance to AI Support Performance

Engagement rate tracks the percentage of visitors who initiate a conversation with your AI support agent. It reflects both how aware users are of the agent and how much value they believe it provides. Think of it as a gateway metric: if users aren’t engaging, other metrics like resolution rate or cost savings become irrelevant.

Calculation or Measurement Method

To calculate engagement rate, divide the number of interactions started by the total number of visitors, then multiply by 100. For example, if 500 out of 10,000 visitors interact with your AI agent, the engagement rate is 5%.

However, not all interactions are equal. Filter out non-genuine conversations such as test messages, spam, or simple greetings like “hi” or “test.” Instead, focus on meaningful “business conversations” where users seek help with product questions or requests like refunds.

For a clearer picture, break down engagement data by user type – like new versus returning users or free versus paid accounts.
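A minimal sketch of the engagement calculation with non-genuine conversations filtered out is shown below. The greeting list is a deliberately crude stand-in for real spam and test detection, and all names and sample data are assumptions.

```python
# Assumption: a small set of throwaway openers that should not count as engagement.
NON_GENUINE = {"hi", "hello", "hey", "test"}


def is_business_conversation(first_message):
    """Crude filter: drop empty messages, bare greetings, and test messages."""
    text = first_message.strip().lower().rstrip("!.?")
    return bool(text) and text not in NON_GENUINE


def engagement_rate(first_messages, total_visitors):
    """Genuine conversations started, as a percentage of all visitors."""
    if total_visitors <= 0:
        raise ValueError("total_visitors must be positive")
    genuine = sum(is_business_conversation(m) for m in first_messages)
    return 100 * genuine / total_visitors


# Hypothetical opening messages from one day of traffic.
messages = [
    "hi",
    "Where is my order #1234?",
    "test",
    "I need a refund",
    "How do I reset my password?",
    "Hello!",
    "Do you ship to Canada?",
]
rate = engagement_rate(messages, total_visitors=80)
print(f"Engagement rate: {rate:.1f}%")
```

Running the same function separately for each user segment (new versus returning, free versus paid) gives the breakdown described above.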

Industry Benchmarks or Targets

While engagement benchmarks vary, the average one-month retention rate for AI agents is around 39%. This means roughly four out of ten users who engage once will return within a month.

Keep an eye out for situations where high visitor numbers don’t lead to meaningful interactions – often referred to as “digital tumbleweeds”. On the flip side, remember that 90% of consumers expect immediate responses when seeking customer service, which often motivates them to engage with AI agents. These benchmarks not only help set performance goals but also highlight areas for improvement.

Business Impact and Improvement Opportunities

Engagement rate directly affects costs, as many AI services charge based on API calls or token usage. High engagement without effective resolutions can indicate a problem – users may try the bot once and leave if it doesn’t meet their needs.

To boost engagement, consider placing your chatbot on high-traffic pages and using proactive triggers to start conversations. Tracking engagement by specific issues (e.g., password resets versus refund requests) can also provide more actionable insights. Additionally, keep an eye out for “rage prompting”, where users type in ALL CAPS or use profanity. High engagement doesn’t always mean users are satisfied.

For more tips on tracking AI support agent performance, check out our guide on the Quidget blog.

6. Task Completion Rate

Definition and Relevance to AI Support Performance

Task Completion Rate (TCR) reflects the percentage of tasks your AI agent completes without requiring human intervention. It’s calculated by dividing the number of successfully completed tasks by the total tasks assigned and multiplying the result by 100.

This metric highlights whether your AI agent is capable of independently handling tasks. While engagement rate measures user interaction, TCR dives deeper, revealing whether those interactions result in tangible outcomes – like resetting a password or processing a refund. Alongside metrics like first response time and containment rate, TCR provides a clear picture of how effectively your AI resolves issues.

Calculation or Measurement Method

For accurate insights, track TCR at the task level rather than the conversation level. A single interaction could involve multiple tasks – such as checking an order status followed by updating account information – so evaluating individual workflows offers more precise data.

Exclude irrelevant interactions like greetings, tests, or spam from your calculations. This ensures you’re focusing only on meaningful, business-related conversations.
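Task-level tracking might look like the following sketch, where each conversation carries a list of tasks and conversations with no tasks (greetings, tests, spam) contribute nothing. The record layout and status labels are hypothetical.

```python
from collections import defaultdict

# Hypothetical conversations; each task is (task_type, status).
conversations = [
    {"id": "c1", "tasks": [("order_status", "completed"), ("update_account", "completed")]},
    {"id": "c2", "tasks": [("refund", "escalated")]},
    {"id": "c3", "tasks": []},  # greeting only, so it adds no tasks
    {"id": "c4", "tasks": [("password_reset", "completed"), ("refund", "completed")]},
]


def task_completion_rate(convs):
    """Completed tasks as a percentage of all tasks, counted per task rather than per conversation."""
    statuses = [status for conv in convs for _, status in conv["tasks"]]
    if not statuses:
        return None
    return 100 * statuses.count("completed") / len(statuses)


def completion_by_task_type(convs):
    """Completion percentage for each task type."""
    totals = defaultdict(int)
    completed = defaultdict(int)
    for conv in convs:
        for task_type, status in conv["tasks"]:
            totals[task_type] += 1
            completed[task_type] += status == "completed"
    return {t: 100 * completed[t] / totals[t] for t in totals}


overall_tcr = task_completion_rate(conversations)
by_type = completion_by_task_type(conversations)
print(f"TCR: {overall_tcr:.1f}%")
print(by_type)
```

Counting at the conversation level would score this sample as three of four conversations, while the task-level view shows four of five tasks completed and points directly at refunds as the weak workflow.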

Once you establish a consistent calculation method, use benchmarks to set realistic performance goals.

Industry Benchmarks or Targets

An effective AI agent should aim for a TCR above 85%. Well-optimized systems typically achieve rates between 85% and 95% for structured tasks. Top-performing AI can even surpass 90% automation while maintaining high customer satisfaction.

For example, the pet retailer Fressnapf achieved an impressive 99.7% automation rate, meaning just 0.3% of conversations required human intervention. Looking ahead, Gartner forecasts that by 2029, AI agents will resolve 80% of common customer service issues autonomously.

Business Impact and Optimization Potential

TCR directly influences cost savings. Automating 75% of support queries can save teams over 40 hours of work each week. With 85% of data and IT leaders under pressure to demonstrate the return on investment (ROI) of generative AI, TCR serves as a critical metric to justify AI expenditures.

To optimize, monitor where users drop off in multi-step workflows and pair TCR data with customer satisfaction (CSAT) scores. After all, a high TCR is only valuable if it aligns with a positive customer experience.

7. Resolution Rate

After task completion rate, resolution rate offers another crucial perspective on how well AI support systems handle customer issues.

Definition and Relevance to AI Support Performance

Resolution rate reflects the percentage of customer inquiries that an AI agent resolves entirely without needing human intervention. Unlike deflection rate – which only indicates that human assistance was avoided – resolution rate confirms that the issue was fully resolved.

This metric is particularly important for gauging AI support effectiveness. As Lauren Goerz from Rasa puts it:

“Solution rate… is my north star metric because it’s the only metric that requires customer confirmation that their problem was solved”.

A high deflection rate combined with a low resolution rate could signal that your AI avoids human involvement but fails to resolve issues effectively.

To fine-tune AI workflows, track each issue individually to identify strengths and areas needing improvement.

Calculation or Measurement Method

To calculate resolution rate, you can use the formula:

(AI-resolved inquiries ÷ Total inquiries) × 100.

Alternatively, for more accuracy:

(Confirmed solutions ÷ Total conversations with feedback) × 100.

Gather immediate binary feedback (e.g., “Was your issue resolved? Yes/No”) to validate results. For added accuracy, verify outcomes like confirming a refund was processed. Exclude irrelevant conversations – such as greetings, spam, or test traffic – to ensure your numbers aren’t skewed. Regularly review a sample of “resolved” conversations to confirm the issues were genuinely addressed.
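The two formulas above can give quite different numbers on the same data, as this sketch suggests. The `ai_resolved` flag stands for whatever the system itself marks as resolved, and `feedback` for the customer's yes/no answer; both field names and the sample records are assumptions.

```python
# Hypothetical conversations; feedback is "yes", "no", or None when no answer was given.
conversations = [
    {"ai_resolved": True, "feedback": "yes"},
    {"ai_resolved": True, "feedback": None},
    {"ai_resolved": True, "feedback": "no"},
    {"ai_resolved": False, "feedback": "no"},
    {"ai_resolved": True, "feedback": "yes"},
]


def system_resolution_rate(convs):
    """(AI-resolved inquiries / total inquiries) x 100, based on the system's own flag."""
    if not convs:
        return None
    return 100 * sum(c["ai_resolved"] for c in convs) / len(convs)


def confirmed_resolution_rate(convs):
    """(Confirmed solutions / conversations with feedback) x 100."""
    with_feedback = [c for c in convs if c["feedback"] is not None]
    if not with_feedback:
        return None
    confirmed = sum(c["feedback"] == "yes" for c in with_feedback)
    return 100 * confirmed / len(with_feedback)


system_rate = system_resolution_rate(conversations)
confirmed_rate = confirmed_resolution_rate(conversations)
print(f"System-reported: {system_rate:.1f}%, customer-confirmed: {confirmed_rate:.1f}%")
```

A large gap between the two figures is a signal to review a sample of conversations the system marked as resolved.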

These methods help benchmark your AI’s resolution rate against industry standards.

Industry Benchmarks or Targets

AI systems designed for repetitive Tier 1 support tasks generally resolve 50–70% of inquiries. Advanced, knowledge-based AI can achieve resolution rates of 70–80%, with some reporting an average of 66.3%. Cutting-edge systems have reached up to 80% resolution without human assistance. For voice AI, first call resolution benchmarks typically range from 70–85%, with top performers exceeding 80%. Additionally, aim for a task completion rate over 85% and response accuracy above 90%.

Business Impact and Optimization Potential

Achieving a high resolution rate can significantly reduce support costs – by as much as 25–30% – and often correlates with a 20% boost in customer satisfaction scores. Like task completion and containment rates, resolution rate confirms that your AI is effectively solving customer problems.

To improve resolution rates, analyze unresolved inquiries to pinpoint knowledge gaps and update your knowledge base. Use tools like Knowledge Bridges to sync your AI with live help centers and FAQs in real time. Start by automating high-volume repetitive tasks, such as order tracking or password resets, before moving on to more complex workflows.

It’s also essential to monitor resolution rate alongside customer satisfaction (CSAT) scores. As Ameya Deshmukh from EverWorker explains:

“Optimizing for deflection can create a ‘knowledgeable receptionist’ that explains policies and then escalates, while optimizing for resolution creates an experience where the AI completes the process end-to-end”.

The ultimate goal is to ensure your AI doesn’t just sidestep escalations but truly resolves customer issues.

8. Goal Completion Rate

Definition and Relevance to AI Support Performance

Goal completion rate is a key metric that evaluates how effectively an AI support agent guides users through multi-step workflows to successfully complete specific tasks. These workflows might involve filing an insurance claim, resetting a password, or processing a refund. It goes beyond metrics like deflection rate by confirming that the AI not only avoided human intervention but also completed the intended task from start to finish.

As Evgen Balter, CEO of Altamira, explains:

“Smart business process decomposition enables your organization to identify KPIs and observability solutions that will allow you to see, manage, and optimize the performance of AI agent.”

To get the clearest picture, it’s best to track performance at the issue level rather than the conversation level. For instance, by tagging each step – like when a refund request starts and when it’s completed – you can pinpoint which workflows are effective and which require adjustments.

Calculation or Measurement Method

The formula for calculating goal completion rate is straightforward:

(Completed workflows ÷ Workflows started) × 100.

When measuring, focus on genuine business interactions. Exclude casual greetings, test queries, or accidental clicks to ensure accuracy. Also, make sure that a “completion” reflects the successful resolution of the task, not just the conclusion of a pre-scripted interaction.
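Assuming each workflow emits tagged "started" and "completed" events, the goal completion formula could be computed per workflow type as in this sketch. The event format and identifiers are hypothetical.

```python
from collections import defaultdict

# Hypothetical event log: (workflow_id, workflow_type, event).
events = [
    ("w1", "refund", "started"),
    ("w1", "refund", "completed"),
    ("w2", "refund", "started"),
    ("w3", "password_reset", "started"),
    ("w3", "password_reset", "completed"),
    ("w4", "password_reset", "started"),
    ("w4", "password_reset", "completed"),
    ("w5", "refund", "completed"),  # no matching start, so it is ignored
]


def goal_completion_by_type(event_log):
    """(Completed workflows / workflows started) x 100 for each workflow type."""
    started = defaultdict(set)
    completed = defaultdict(set)
    for workflow_id, workflow_type, event in event_log:
        if event == "started":
            started[workflow_type].add(workflow_id)
        elif event == "completed":
            completed[workflow_type].add(workflow_id)
    return {
        workflow_type: 100 * len(ids & completed[workflow_type]) / len(ids)
        for workflow_type, ids in started.items()
    }


rates = goal_completion_by_type(events)
print(rates)
```

Only workflows that were actually started count toward the denominator, and a completion is credited only when it matches a recorded start.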

Industry Benchmarks or Targets

Top-performing AI systems often achieve goal completion rates above 85%. For structured tasks, many systems report autonomous completion rates between 85% and 95%. Studies show that 68% of production AI agents require human intervention in fewer than 10 steps, underscoring the importance of refining workflows.

For example, in 2024, JPMorgan Chase automated tasks that previously required manual effort, saving 360,000 hours annually and generating approximately $20 million in value. Similarly, the Cleveland Clinic used AI to optimize care pathways, reducing patient stay lengths by 30% and achieving a 270% ROI. These examples highlight how goal completion rate can directly impact efficiency and profitability.

Business Impact and Optimization Potential

Tracking goal completion rate alongside customer satisfaction (CSAT) is critical. If high completion rates coincide with low CSAT, it could indicate that the process feels cumbersome or frustrating for users. Be alert for “rage prompting”, where users repeatedly rephrase questions or express frustration – this often signals broken workflows.

Additionally, compare the AI’s performance with traditional click-based processes. If your conventional UI achieves better conversion rates, it might be time to rethink your AI workflows. Reviewing unsupported request metrics can also reveal tasks users expect the AI to handle but currently cannot, providing valuable insights for future improvements.

9. Average Handle Time

Definition and Relevance to AI Support Performance

Average Handle Time (AHT) measures the average time an agent spends resolving a ticket – from the moment it’s opened to its resolution. It’s a balancing act: a lower AHT indicates faster resolutions but must not come at the cost of increased customer effort or reduced quality.

“AHT is optimized at the expense of effort. Rushing customers through scripted flows can lower handle time while increasing repeat contacts and effort”.

For AI-assisted agents, AHT is reduced by automating tasks like researching, summarizing conversation histories, or drafting responses in seconds. In fully autonomous AI setups, AHT reflects the time from the user’s initial query to the successful completion of the task.

Calculation or Measurement Method

In autonomous AI scenarios, AHT is calculated by dividing the total time spent on successful tasks (from the first prompt to the final action) by the number of those tasks. For AI-assisted human agents, the formula remains:

(Total talk time + hold time + wrap-up time) ÷ number of resolved tickets.

Using median times helps eliminate outliers, such as when a user opens a chat but leaves before engaging. It’s also vital to track AHT separately for AI-only resolutions, AI-to-human handoffs, and human-only tickets. This breakdown identifies where AI adds efficiency or creates bottlenecks.
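The effect of outliers on AHT, and the value of segmenting it, can be seen in a small sketch like this one. The handle times are made-up values in seconds, and the segment names mirror the three categories above.

```python
from statistics import mean, median

# Hypothetical handle times in seconds; the 900 s AI-only entry is an outlier.
handle_seconds = {
    "ai_only": [40, 55, 60, 65, 900],
    "ai_to_human": [300, 420, 480],
    "human_only": [600, 720, 900, 780],
}


def aht_summary(times_by_segment):
    """Mean and median handle time for each segment."""
    return {
        segment: {"mean": mean(times), "median": median(times)}
        for segment, times in times_by_segment.items()
    }


summary = aht_summary(handle_seconds)
for segment, stats in summary.items():
    print(f"{segment}: mean={stats['mean']:.0f}s, median={stats['median']:.0f}s")
```

For the AI-only segment, a single stalled session pulls the mean to almost four times the median, which is why the median is often the more representative figure.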

Comparing AHT in AI workflows versus traditional methods reveals areas where the AI might need fine-tuning. These insights are crucial for aligning with industry standards.

Industry Benchmarks or Targets

AI has the potential to significantly lower AHT – from 15 minutes to as little as 5 minutes – reducing the cost per resolution from $10 to around $2–$3. By streamlining processes, AI-driven support can cut overall service costs by up to 30%, while boosting human agent productivity by 20–35%. With 85% of IT and data leaders under pressure to demonstrate ROI for generative AI, metrics like AHT are essential for justifying these investments.

Business Impact and Optimization Potential

To truly measure the impact of AI on support performance, AHT should be evaluated alongside other metrics like CSAT. This ensures that faster resolutions don’t come at the expense of service quality. For a more detailed analysis, measure AHT at the issue level – comparing workflows for simpler tasks, like password resets, to more complex ones, like refund requests. Exclude irrelevant interactions to prevent skewing the results.

10. Cost Savings

Definition and Relevance to AI Support Performance

Tracking cost savings is just as important as monitoring metrics like CSAT and resolution rates. Why? Because it directly showcases the financial benefits of automation. Cost savings measure how much money your business saves by comparing operational expenses before and after implementing AI support. It highlights the financial impact of automating tasks, cutting labor costs, and improving operational efficiency.

AI agents help save money in three key areas: reducing labor costs (by taking over tasks that used to require human agents), boosting operational efficiency (saving time on manual tasks like data entry), and preventing errors (avoiding expensive mistakes such as LLM hallucinations, which are projected to cost businesses over $67 billion in 2024). These savings help determine if your AI investment is paying off.

Calculation or Measurement Method

So, how do you calculate cost savings? One simple approach is to focus on labor cost reduction. For instance, if your AI takes over tasks previously handled by three full-time employees, the savings equal their combined salaries.

For a more detailed analysis, calculate Cost Per Ticket (CPT). Divide your total AI-related costs (including infrastructure, API usage, and oversight) by the number of resolved tickets. Then compare this to your historical CPT when only humans were involved. For example, one retail company cut its ticket cost from $6.20 to $3.80 in just four months by achieving a 78% autonomous resolution rate.

You can also measure overall ROI using this formula: (Net Benefits ÷ Total Investment) × 100. Net benefits include cost savings, revenue gains, and risk reduction, minus all implementation costs like development, training, and maintenance.

Another key metric is unit economics. If the cost per successful AI session (e.g., $2.00) is higher than a human session (e.g., $1.50), the setup isn’t financially viable.
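The cost formulas in this section can be combined into a few small helpers, sketched below. The function names and the dollar totals are hypothetical; the per-ticket and per-session figures echo the examples in the text.

```python
def cost_per_ticket(total_cost, resolved_tickets):
    """Total AI-related cost divided by the number of resolved tickets."""
    if resolved_tickets <= 0:
        raise ValueError("resolved_tickets must be positive")
    return total_cost / resolved_tickets


def roi_percent(net_benefits, total_investment):
    """(Net benefits / total investment) x 100."""
    if total_investment <= 0:
        raise ValueError("total_investment must be positive")
    return 100 * net_benefits / total_investment


def ai_is_cheaper(ai_cost_per_session, human_cost_per_session):
    """Unit economics check: is a successful AI session cheaper than a human one?"""
    return ai_cost_per_session < human_cost_per_session


# Hypothetical totals chosen to reproduce the $3.80 per-ticket figure from the text.
ai_cpt = cost_per_ticket(total_cost=38_000, resolved_tickets=10_000)
human_cpt = 6.20
saving_per_ticket = round(human_cpt - ai_cpt, 2)

# Hypothetical net benefits and investment.
roi = roi_percent(net_benefits=60_000, total_investment=20_000)

print(f"AI cost per ticket: ${ai_cpt:.2f} (saves ${saving_per_ticket:.2f} per ticket)")
print(f"ROI: {roi:.0f}%")
print("AI cheaper per session:", ai_is_cheaper(2.00, 1.50))
```

The last check returns `False` for the $2.00 versus $1.50 example, matching the point that such a setup is not financially viable.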

Industry Benchmarks or Targets

AI adoption in customer service can slash costs by up to 25%. On average, enterprise AI agents deliver 3x to 6x ROI within their first year, with customer service automation typically reaching a 4.2x ROI. Advanced AI systems can autonomously resolve up to 80% of queries, significantly lowering ticket costs.

However, challenges remain. Around 95% of generative AI pilots fail to deliver measurable financial returns, often due to poor performance tracking. Success rates vary based on the implementation strategy – off-the-shelf AI tools have a 67% success rate compared to just 22% for in-house builds.

Business Impact and Optimization Potential

To maximize cost savings, focus on value-driven metrics like cost per ticket, AI first response time, and autonomous resolution rate instead of just activity-based metrics like chat volume. Start by establishing a baseline – track metrics like average first response time, CSAT, and cost per ticket before deploying AI. This helps you measure the “before and after” impact more accurately.

Keep an eye on token usage per session to avoid excessive costs that can double operational expenses. Pay special attention to “one-touch” resolutions, where a ticket is resolved with a single AI response, as these offer the highest cost-saving potential. However, remember that time savings only become real financial savings if those hours are redirected to higher-value tasks or result in reduced headcount.

Balancing efficiency with quality is also critical. Organizations that focus solely on efficiency metrics often see a 23% rise in rework costs, while those that balance efficiency and quality achieve 15% better overall performance. With 85% of IT and analytics leaders under pressure from the C-suite to prove generative AI ROI, tracking cost savings is essential for evaluating the financial success of your AI investment.

Want to simplify this process? Tools like Quidget’s built-in analytics can help you monitor cost-saving metrics in real time.

Comparison Table

Quick reference for tracking key AI support metrics.

| Metric | Measures | Target | How to Calculate | How Quidget Tracks It |
|---|---|---|---|---|
| Response Time | Time from query to first meaningful reply | < 2 seconds | First reply time divided by resolved tickets | Automatically logs time from user submission to AI response via model latency tracking |
| Containment Rate | Issues resolved without customer follow-up on other channels | > 70% | AI conversations with no follow-up divided by total AI conversations, times 100 | Cross-channel integration monitors if customers return within 24-48 hours via any support channel |
| First Contact Resolution (FCR) | Issues resolved in a single interaction | 70–85% | One-touch tickets divided by total tickets received, times 100 | Identifies sessions that don’t result in a second contact or escalation to human agents |
| Customer Satisfaction (CSAT) | Customer happiness with AI interaction | > 80% | Positive responses divided by total responses, times 100 | Post-interaction surveys using a 1-5 or 1-7 scale, segmented by AI-only vs. AI-to-human handoffs |
| Engagement Rate | Depth of user interaction with the AI | High prompts per user ratio | Total prompts divided by total unique visitors | Segmented by user type for clearer insights |
| Task Completion Rate | Tasks finished without human intervention | 85–95% | Successful tasks divided by total tasks assigned, times 100 | Workflow completion triggers and autonomous execution logs track multi-step processes |
| Resolution Rate | Conversations where customer confirms problem was solved | 72%+ | Confirmed solutions divided by total feedback responses, times 100 | Binary user feedback (Thumbs Up/Down) collected immediately after interaction |
| Goal Completion Rate | Success in achieving intended business outcomes | 85%+ | Successful goal completions divided by total goal attempts, times 100 | Compares final agent output against predefined success parameters and conversion events |
| Average Handle Time (AHT) | Duration of AI-assisted interaction from start to finish | Lower than human AHT | Total handling time divided by total interactions | Tracks time from first user prompt to final successful action or closure |
| Cost Savings | Financial benefit from AI automation vs. human-handled tickets | 3x–6x ROI within first year | Compare labor cost savings with AI costs per successful ticket | Divides total token/API costs by successful goal completions to calculate cost per ticket |

This table serves as a handy summary of key performance metrics, offering a snapshot of how to measure and track AI support results effectively. Pairing related metrics can reveal deeper insights into performance trends. For example, combining First Contact Resolution with Containment Rate can highlight how well the AI handles issues independently.
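To make a few of these formulas concrete, here is a rough sketch that computes FCR, CSAT, and Resolution Rate from a list of conversation records. The `Conversation` fields are hypothetical, and counting a score of 4 or 5 on a 1-5 scale as a "positive" CSAT response is a common convention assumed here, not something the table specifies.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Conversation:
    contacts: int                # how many times the customer had to reach out
    escalated: bool              # handed off to a human agent
    csat_score: Optional[int]    # 1-5 survey score, None if no survey response
    thumbs_up: Optional[bool]    # binary feedback, None if not given


def pct(part: int, whole: int) -> float:
    """Percentage rounded to one decimal; 0.0 when there is no data."""
    return round(part * 100 / whole, 1) if whole else 0.0


def scorecard(convs: list[Conversation]) -> dict[str, float]:
    rated = [c for c in convs if c.csat_score is not None]
    feedback = [c for c in convs if c.thumbs_up is not None]
    return {
        # One contact and no escalation counts as resolved on first contact.
        "fcr_pct": pct(sum(c.contacts == 1 and not c.escalated for c in convs), len(convs)),
        # Assumption: scores of 4 or 5 are "positive" responses.
        "csat_pct": pct(sum(c.csat_score >= 4 for c in rated), len(rated)),
        # Confirmed solutions divided by feedback responses.
        "resolution_rate_pct": pct(sum(c.thumbs_up for c in feedback), len(feedback)),
    }


conversations = [
    Conversation(contacts=1, escalated=False, csat_score=5, thumbs_up=True),
    Conversation(contacts=1, escalated=False, csat_score=4, thumbs_up=True),
    Conversation(contacts=2, escalated=False, csat_score=3, thumbs_up=False),
    Conversation(contacts=1, escalated=True, csat_score=2, thumbs_up=None),
    Conversation(contacts=1, escalated=False, csat_score=None, thumbs_up=True),
]
scores = scorecard(conversations)
print(scores)
```

Note that CSAT and Resolution Rate use only the conversations that actually returned feedback as their denominator, so a low survey response rate can make both numbers noisy.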

Start by benchmarking these metrics against your human agent data. If your human team achieves a 75% FCR, aim for your AI to match or exceed that within the first quarter. For a more detailed view, analyze performance at the issue level, not just the conversation level. Customers often raise multiple questions in one interaction, and breaking these down can help identify workflows that need refinement or additional training.

Conclusion

Measuring the performance of AI support agents goes beyond crunching numbers – it’s about demonstrating real business impact. Surprisingly, over 50% of AI support projects fail, not because the technology falters, but because companies struggle to measure and improve what truly matters. The ten metrics discussed here provide a clear framework to help you move past guesswork and present leadership with tangible evidence of AI’s effect on your bottom line. This approach helps identify where your AI support systems excel and where they need fine-tuning.

Consider this: companies typically earn $3.50 for every $1 invested in AI, with some achieving returns as high as $8. On the cost side, human-handled tickets range from $5–$12, while AI-resolved tickets cost just $1–$2. Yet, 80% of companies report limited financial gains from AI initiatives due to poor success measurement.

Start by setting a baseline before introducing AI. Document metrics like your current cost per ticket, average handle time, and customer satisfaction (CSAT) scores. Once AI is in place, break your data into three groups: AI-only resolutions, AI-to-human handoffs, and human-only tickets. This segmentation highlights where AI delivers value and where it needs improvement. These insights provide a roadmap for refining your AI support strategy.
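The three-way split can be sketched in a few lines. The ticket tuples below are invented sample data, and the group labels (`ai_only`, `ai_to_human`, `human_only`) are just illustrative names for the three groups described above.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical ticket export: (handled_by, cost_usd, csat_score)
tickets = [
    ("ai_only", 1.5, 5),
    ("ai_only", 1.0, 4),
    ("ai_to_human", 7.0, 3),
    ("human_only", 9.0, 4),
    ("human_only", 11.0, 5),
]


def segment_report(rows):
    """Group tickets by who handled them and average cost and CSAT per group."""
    groups = defaultdict(list)
    for handled_by, cost, csat in rows:
        groups[handled_by].append((cost, csat))
    return {
        name: {
            "tickets": len(items),
            "avg_cost_usd": round(mean(cost for cost, _ in items), 2),
            "avg_csat": round(mean(score for _, score in items), 2),
        }
        for name, items in groups.items()
    }


report = segment_report(tickets)
for name, stats in report.items():
    print(name, stats)
```

Comparing the `ai_to_human` group against the other two is often the most revealing, since handoffs carry both the AI cost and the human cost.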

Remember, the goal isn’t perfection from day one. Instead, focus on creating a feedback loop where each metric drives continuous improvement. For instance, if high deflection rates are paired with low CSAT scores, it signals that customers might be hitting roadblocks rather than receiving effective support. Similarly, a drop in first contact resolution for specific issues points to areas where training or knowledge base updates are needed.

Ready to take the next step? Try Quidget free to track these essential metrics and discover how data-driven insights can revolutionize your customer support.

FAQs

What’s the difference between containment and deflection?

Containment and deflection are two metrics used to evaluate AI support agents, each focusing on different aspects of performance. Containment refers to the percentage of issues the AI resolves completely on its own, without requiring follow-ups or human involvement. In contrast, deflection measures how many interactions the AI manages without escalating them to a human agent. While containment emphasizes the quality of issue resolution, deflection reflects how well the AI minimizes the workload for human agents.
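Under the definitions above, the two rates can be computed side by side, as in this small sketch. The `Interaction` fields are hypothetical, and treating "follow-up" as the customer returning about the same issue is an assumption you would need to map onto your own data.

```python
from dataclasses import dataclass


@dataclass
class Interaction:
    escalated_to_human: bool
    followed_up_later: bool  # customer came back about the same issue


def deflection_rate(items: list[Interaction]) -> float:
    """Share of interactions the AI handled without escalating to a human."""
    kept = sum(not i.escalated_to_human for i in items)
    return kept * 100 / len(items)


def containment_rate(items: list[Interaction]) -> float:
    """Share of interactions with no escalation and no later follow-up."""
    contained = sum(
        not i.escalated_to_human and not i.followed_up_later for i in items
    )
    return contained * 100 / len(items)


# Sample data: 2 escalated, 2 not escalated but followed up later, 6 fully resolved.
interactions = (
    [Interaction(True, False)] * 2
    + [Interaction(False, True)] * 2
    + [Interaction(False, False)] * 6
)
print(deflection_rate(interactions))   # 80.0
print(containment_rate(interactions))  # 60.0
```

With these definitions containment can never exceed deflection, and the gap between the two shows how many customers were not escalated yet still had to come back.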

How do I set a baseline before launching an AI support agent?

To get started, focus on identifying core metrics such as deflection rate, containment rate, resolution rate, and customer satisfaction (CSAT). These will help you gauge your current performance. Dive into existing data, like response times and resolution rates, to create a solid benchmark for comparison. Make sure to document these metrics carefully – this will be crucial for tracking progress and assessing how the AI agent influences performance after it’s implemented.

How do I calculate ROI for an AI support agent?

To figure out the ROI of an AI support agent, focus on key metrics like ticket deflection rate, first response time, resolution rate, and customer satisfaction scores (CSAT). Weigh the savings from fewer support tickets and quicker resolutions against the costs of implementing and maintaining the system. In its simplest form, ROI is the net savings (total savings minus total AI costs) divided by total AI costs. If you see high deflection rates and better CSAT scores, it’s a good sign that the system is working efficiently, easing the workload, and improving the overall customer experience – making the investment worthwhile.
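As a back-of-the-envelope sketch, the calculation might look like the code below. The ticket volume and annual investment are invented for illustration, and the per-ticket costs are picked from within the ranges quoted earlier in this article ($5–$12 for human-handled tickets, $1–$2 for AI-resolved ones). The "return multiple" here is simply savings divided by investment, which is one plausible reading of figures like "3x ROI".

```python
def annual_savings(ai_tickets_per_month: int, human_cost: float, ai_cost: float) -> float:
    """Yearly savings from tickets the AI resolves instead of a human."""
    return ai_tickets_per_month * 12 * (human_cost - ai_cost)


def roi(savings: float, investment: float) -> dict[str, float]:
    """Return multiple (savings / investment) and net ROI in percent."""
    return {
        "return_multiple": round(savings / investment, 2),
        "roi_pct": round((savings - investment) / investment * 100, 1),
    }


# Hypothetical inputs: 5,000 AI-resolved tickets a month, $8.00 per human ticket,
# $1.50 per AI ticket, and $100,000 a year for licensing, setup, and maintenance.
savings = annual_savings(ai_tickets_per_month=5000, human_cost=8.0, ai_cost=1.5)
result = roi(savings, investment=100_000)
print(savings, result)
```

Remember the earlier caveat: these savings only materialize if the agent hours freed up are redirected to higher-value work or reflected in headcount.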